Precision as a Logic Constant.

High-signal analytics require more than raw processing power. Our methodology centers on the rigorous isolation of noise from core data streams to ensure enterprise infrastructure remains resilient under load.

The Signal Isolation Framework

Data integrity is often compromised not by incorrect inputs, but by poorly defined logical boundaries. We apply a multi-tier verification process to every node within the IT infrastructure.

Our current framework operates on a zero-trust logic model, where every byte is treated as an unverified variable until the structural handshake is complete.
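As a rough illustration of the zero-trust idea, the sketch below keeps a payload out of downstream code until an integrity check succeeds. The use of HMAC-SHA256 and the names `Verified` and `verify` are assumptions made for this example; the framework's actual handshake is not specified here.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Verified:
    """Wrapper marking a payload that has passed the integrity check."""
    payload: bytes


def verify(payload: bytes, tag: bytes, key: bytes) -> Optional[Verified]:
    """Return a Verified wrapper only if the tag matches the payload.

    Assumption: sender and receiver share `key` and the tag is HMAC-SHA256.
    """
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    # compare_digest avoids leaking how many leading bytes matched.
    if hmac.compare_digest(expected, tag):
        return Verified(payload)
    return None
```

Downstream functions can then accept only `Verified` values, so unverified bytes cannot reach them by accident.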

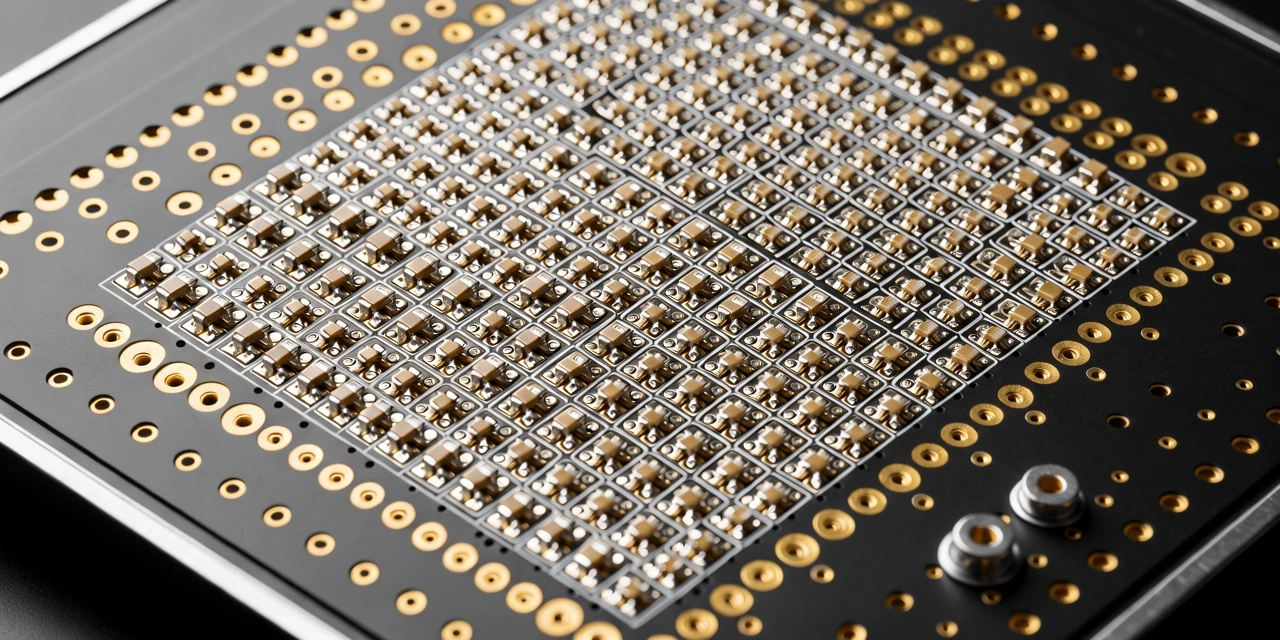

Structural Mapping

Before analysis begins, we map the underlying logic of the existing hardware. This identifies bottlenecks where data volume exceeds a port's processing capacity.

Noise Attenuation

We deploy automated filters that strip telemetry overhead from core data packets. This increases signal clarity by up to 40% before the data reaches the analytics engine.
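A filter of this kind might look like the following minimal sketch, which assumes packets arrive as dictionaries and that telemetry fields can be recognized by name. The field names in `TELEMETRY_KEYS` are hypothetical and chosen only for illustration.

```python
from typing import Any, Dict

# Hypothetical telemetry field names; a real deployment would define its own.
TELEMETRY_KEYS = frozenset({"trace_id", "hop_count", "debug_flags"})


def strip_telemetry(packet: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of the packet without telemetry fields."""
    return {k: v for k, v in packet.items() if k not in TELEMETRY_KEYS}
```

The function returns a new dictionary rather than mutating its input, so the original packet stays available for auditing.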

Verification Loops

Every conclusion drawn by our systems is passed through a secondary logic gate to prevent algorithmic drift. This ensures that the data stays relevant as the infrastructure scales.
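One way to picture a secondary gate is to accept a conclusion only when two independent checks agree. In the sketch below, the mean-based and median-based checks and the name `gated_conclusion` are assumptions for illustration, not the production logic.

```python
from statistics import mean, median
from typing import Callable, Sequence

Check = Callable[[Sequence[float]], bool]


def mean_above(limit: float) -> Check:
    """Primary check: the window's mean exceeds the limit."""
    return lambda window: mean(window) > limit


def median_above(limit: float) -> Check:
    """Secondary check: the window's median exceeds the limit."""
    return lambda window: median(window) > limit


def gated_conclusion(window: Sequence[float], primary: Check, secondary: Check) -> bool:
    """Accept the conclusion only if both checks agree."""
    return primary(window) and secondary(window)
```

A single outlier can push the mean over a limit while leaving the median unchanged, so the second check rejects conclusions that rest on one extreme reading.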

Edge Synthesis

Processing occurs as close to the data source as possible. Distributed logic reduces latency and prevents the central core from becoming a single point of congestion.

Real-World Logic Application

The environments we handle range from distributed cloud nodes to highly localized financial server rooms. Regardless of the scale, our logic remains consistent: minimize the distance between the event and the insight.

- 01 Deployment of high-frequency sensing logic to monitor thermal and throughput stability.

- 02 Integration of predictive analytics that anticipate hardware failure before logical continuity is broken.

- 03 Continuous auditing of packet integrity across regional networks to ensure Malaysian standards are met.
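To make the first two items concrete, the sketch below estimates how many more samples a rising thermal reading needs before it crosses a limit, using a simple linear extrapolation. The function name, the linear model, and the example limit are assumptions; a production predictor would likely be more sophisticated.

```python
import math
from typing import Optional, Sequence


def samples_until_limit(readings: Sequence[float], limit: float) -> Optional[int]:
    """Estimate samples remaining before `limit` is reached.

    Uses the average slope across the window (a deliberately simple model).
    Returns None when there is no upward trend or too little data.
    """
    if len(readings) < 2:
        return None
    slope = (readings[-1] - readings[0]) / (len(readings) - 1)
    if slope <= 0:
        return None
    remaining = limit - readings[-1]
    if remaining <= 0:
        return 0  # the limit has already been reached
    return math.ceil(remaining / slope)
```

For example, readings of 60, 62, 64 and 66 against a limit of 80 rise by 2 per sample, leaving an estimated 7 samples before the limit is reached.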

Analytical Standards & Compliance

Our protocols are built to exceed the rigorous demands of enterprise IT. We follow a strict sequence of verification that ensures zero data loss during high-volume transfers.

Logical Audit

We examine the existing software architecture to identify logic traps that could cause recursive loops or data starvation.
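A common way to find recursive loops is to model components as a dependency graph and search it for cycles. The depth-first sketch below assumes the architecture can be expressed as a mapping from each component to the components it calls; `find_cycle` and the graph shape are illustrative choices, not a description of our audit tooling.

```python
from typing import Dict, List, Optional

WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current path, finished


def find_cycle(graph: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one cycle as a list of nodes, or None if the graph is acyclic."""
    color = {node: WHITE for node in graph}
    stack: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        color[node] = GREY
        stack.append(node)
        for nxt in graph.get(node, []):
            state = color.get(nxt, WHITE)
            if state == GREY:
                # nxt is already on the current path: a loop is closed.
                return stack[stack.index(nxt):] + [nxt]
            if state == WHITE:
                found = visit(nxt)
                if found:
                    return found
        stack.pop()
        color[node] = BLACK
        return None

    for node in graph:
        if color[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return None
```

The search is recursive, so very deep graphs would need an iterative variant to stay within Python's recursion limit.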

Stress Validation

Systems are subjected to 3x peak load simulations to verify that the logic frameworks handle overflow without degrading signal quality.
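The idea behind a 3x peak load simulation can be sketched with a toy queue model: arrivals come in at three times the peak rate, a fixed number of items is served per tick, and anything beyond the buffer capacity is counted as dropped. All parameter names and the tick-based model are assumptions for illustration.

```python
from typing import Tuple


def simulate_overload(
    peak_rate: int,
    service_rate: int,
    capacity: int,
    ticks: int,
    multiplier: int = 3,
) -> Tuple[int, int]:
    """Run a deterministic overload simulation.

    Returns (dropped_items, max_backlog) after `ticks` steps.
    """
    backlog = 0
    dropped = 0
    max_backlog = 0
    arrivals = peak_rate * multiplier
    for _ in range(ticks):
        backlog += arrivals
        if backlog > capacity:
            # Overflow: count what does not fit in the buffer.
            dropped += backlog - capacity
            backlog = capacity
        max_backlog = max(max_backlog, backlog)
        backlog -= min(backlog, service_rate)
    return dropped, max_backlog
```

A run passes this toy check when the dropped count stays at zero for the chosen capacity and service rate.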

Signal Handover

Final deployment involves a live mirror test to ensure that the transition to the new framework is achieved with zero downtime.

Why Quantitative Logic Matters

In the current enterprise landscape, data is abundant but clarity is scarce. Many organizations collect massive amounts of telemetry without a coherent logic system to interpret it. This leads to what we call "Analytical Debt": a state where the cost of interpreting data exceeds the value of the insights produced.

Singapore Core Logic addresses this by implementing a standardized data hierarchy. We classify information based on its actionable potential, ensuring that your infrastructure focuses limited compute resources on high-signal events.
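A data hierarchy of this kind could be sketched as a tiering function plus a budgeted selection step. The tier names, the score thresholds (0.8 and 0.4), and the assumption that each event carries a precomputed actionability score between 0 and 1 are hypothetical and serve only to illustrate the approach.

```python
from enum import IntEnum
from typing import List, Sequence, Tuple


class Tier(IntEnum):
    LOW = 0
    MEDIUM = 1
    HIGH = 2


def classify(score: float) -> Tier:
    """Map an actionability score in [0, 1] to a tier (thresholds are illustrative)."""
    if score >= 0.8:
        return Tier.HIGH
    if score >= 0.4:
        return Tier.MEDIUM
    return Tier.LOW


def select_for_processing(events: Sequence[Tuple[str, float]], budget: int) -> List[str]:
    """Pick up to `budget` event names, highest score first, skipping LOW-tier events."""
    ranked = sorted(events, key=lambda e: e[1], reverse=True)
    return [name for name, score in ranked[:budget] if classify(score) is not Tier.LOW]
```

Low-tier events are skipped even when budget remains, which keeps compute reserved for events that are likely to lead to action.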

Operational Integrity

We revise our methodology every six months to adapt to new hardware security protocols and evolving data transfer standards. This keeps verification accuracy at 99.99% across all client deployments.

Ready to refine your system logic?

Our team is available Monday to Friday, 9:00 to 18:00, for deep-dive methodology walkthroughs.